Speedup algorithm: policy iterations

Recall the basic grid algorithm from 0723 and 0724:

(1) Set up a grid of k and k’, arranged columnwise and rowwise respectively.

(2) Calculate the utility of consumption, U, on the grid above.

(3) Starting from an initial guess v, update v1=U+beta*v’, maximizing over k’.

(4) Repeat (3), setting v=v1, a fixed number of times or until a convergence criterion is met.

(5) Read off the policy: for each k, the k’ that attains the maximum.
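Steps (1) to (5) can be sketched in Python with NumPy as follows. This is a minimal illustration, not the code from 0723 or 0724. The model is an assumption made only so that the sketch runs: log utility, production k**alpha, and full depreciation. The parameter values, the grid bounds, and the layout (k’ down the rows, k across the columns) are likewise assumptions.

```python
import numpy as np

# Assumed model (not given in the text): u(c) = log(c), f(k) = k**alpha,
# full depreciation, so c = k**alpha - k'.
alpha, beta = 0.3, 0.95
n = 200
k_ss = (alpha * beta) ** (1.0 / (1.0 - alpha))   # steady state of the assumed model
kgrid = np.linspace(0.2 * k_ss, 2.0 * k_ss, n)

# (1) Grid: k' varies down the rows, k across the columns (an assumed layout).
kp = kgrid[:, None]
k = kgrid[None, :]

# (2) Utility of consumption on the grid; infeasible pairs (c <= 0) get -inf.
c = k ** alpha - kp
U = np.full_like(c, -np.inf)
U[c > 0] = np.log(c[c > 0])

# (3)-(4) Iterate v1 = max over k' of U + beta * v(k') until v stops changing.
v = np.zeros(n)
tol, max_iter = 1e-8, 5000
for it in range(1, max_iter + 1):
    W = U + beta * v[:, None]    # v is indexed by k', so it is spread over the rows
    v1 = W.max(axis=0)           # maximize over k' for each k
    diff = np.max(np.abs(v1 - v))
    v = v1
    if diff < tol:
        break

# (5) Policy: the k' attaining the maximum for each k.
kprime = kgrid[W.argmax(axis=0)]
print(f"stopped after {it} iterations, sup-norm change {diff:.2e}")
```

Under these particular assumptions the exact policy is known in closed form, k’ = alpha*beta*k**alpha, so `kprime` can be compared against it as a sanity check of the grid solution.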

These are based on value function iterations. Now we introduce policy iterations.

The additional algorithm is:

(a) Find the corresponding k’ from (3) in value function iterations.

(b) Calculate utility from k and k’ as r.

(c) Set vp=(1/(1-beta))*r and update vp1=U+beta*vp, as in (3).

(d) Repeat (a) to (c), setting vp=vp1, until a convergence criterion is met.

In (c), we set u=r, solve

V=r+beta*V

for V, and use the solution as vp.
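The equation in (c) can be solved in one line at each grid point, which is where the factor 1/(1-beta) comes from. As a worked step (the numbers beta = 0.95 and r = -1 are illustrative assumptions, not values from the model):

$$
V = r + \beta V \;\Longrightarrow\; (1-\beta)\,V = r \;\Longrightarrow\; V = \frac{r}{1-\beta},
\qquad \text{e.g. } \beta = 0.95,\ r = -1 \;\Rightarrow\; V = \frac{-1}{0.05} = -20 .
$$

Equivalently, V is the discounted sum r(1 + beta + beta^2 + ...) of receiving r in every period.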

We run the value function iterations as before and obtain k’ at each iteration.

However, we judge convergence in terms of vp, which is built from the r calculated from k and k’.

The numerical policy iterations are slightly different from what we performed by hand.

The result must be the same as that of value function iterations, only with a different number of iterations.
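The claim that both methods agree while needing different numbers of iterations can be checked numerically. The sketch below is an illustration under assumptions, not the program used for these notes: the model (log utility, production k**alpha, full depreciation), the parameters, and the function names `value_iteration` and `policy_iteration` are all hypothetical. It also swaps in the textbook (Howard) evaluation step, which solves V = r + beta*Q*V with a 0/1 transition matrix Q, rather than the elementwise shortcut vp=(1/(1-beta))*r of step (c).

```python
import numpy as np

# Assumed model, for illustration only: u(c) = log(c), f(k) = k**alpha,
# full depreciation.
alpha, beta, n = 0.3, 0.95, 200
k_ss = (alpha * beta) ** (1.0 / (1.0 - alpha))
kgrid = np.linspace(0.2 * k_ss, 2.0 * k_ss, n)
c = kgrid[None, :] ** alpha - kgrid[:, None]    # rows: k', columns: k
U = np.full_like(c, -np.inf)
U[c > 0] = np.log(c[c > 0])

def value_iteration(U, beta, tol=1e-8, max_iter=5000):
    """Plain value function iteration; returns (v, policy index, iterations)."""
    v = np.zeros(U.shape[1])
    for it in range(1, max_iter + 1):
        W = U + beta * v[:, None]
        v1 = W.max(axis=0)
        done = np.max(np.abs(v1 - v)) < tol
        v = v1
        if done:
            break
    return v, W.argmax(axis=0), it

def policy_iteration(U, beta, max_iter=200):
    """Howard policy iteration with exact policy evaluation (swapped-in variant)."""
    n = U.shape[1]
    cols = np.arange(n)
    idx = U.argmax(axis=0)                 # initial policy: greedy for v = 0
    for it in range(1, max_iter + 1):
        r = U[idx, cols]                   # (b) utility from k and k'
        Q = np.zeros((n, n))
        Q[cols, idx] = 1.0                 # k moves to kgrid[idx] with certainty
        vp = np.linalg.solve(np.eye(n) - beta * Q, r)      # V = r + beta*Q@V
        new_idx = (U + beta * vp[:, None]).argmax(axis=0)  # (a) improved k'
        if np.array_equal(new_idx, idx):   # policy unchanged: stop
            break
        idx = new_idx
    return vp, idx, it

v_vfi, idx_vfi, it_vfi = value_iteration(U, beta)
v_pi, idx_pi, it_pi = policy_iteration(U, beta)
print("value function iterations:", it_vfi)
print("policy iterations:        ", it_pi)
print("max gap in values:        ", np.max(np.abs(v_vfi - v_pi)))
```

If a speedup routine returns values or a policy that differ from the plain value function iteration by more than the stopping tolerance allows, that points to an implementation problem rather than to a property of the method.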

We consider the following basic model:

![]() where ![]() and ![]() as a starter.

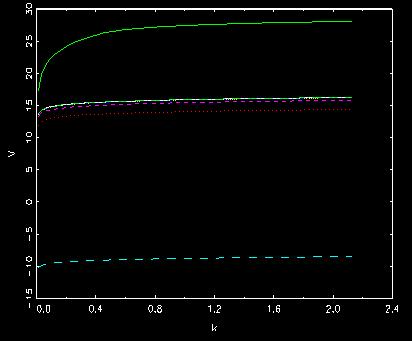

Behavior of the value function: it starts at the top, drops to the bottom, and then shifts up, converging within 10 iterations.

Note that many programs, mostly written by the papers’ authors, fail to reproduce the results of their plain versions, which means they are implemented incorrectly.